MNLAB Meetup – Kubernetes

What did we talk about?

This week, the subject of our MNLAB meetup was Kubernetes! Well, not only Kubernetes, but also a few other topics, all of which appear in the presentation title below. We started with a short history of how things evolved from the bare-metal age to what we today call serverless. Then we moved on to containers, container runtimes, container orchestration and Kubernetes. Finally, we closed the meetup with some humorous quotes and pictures about computer science and “abstractions”.

Download

- the presentation .pptx file from here (~23MB)

- or the .pdf version from here (~4MB, without the embedded video)

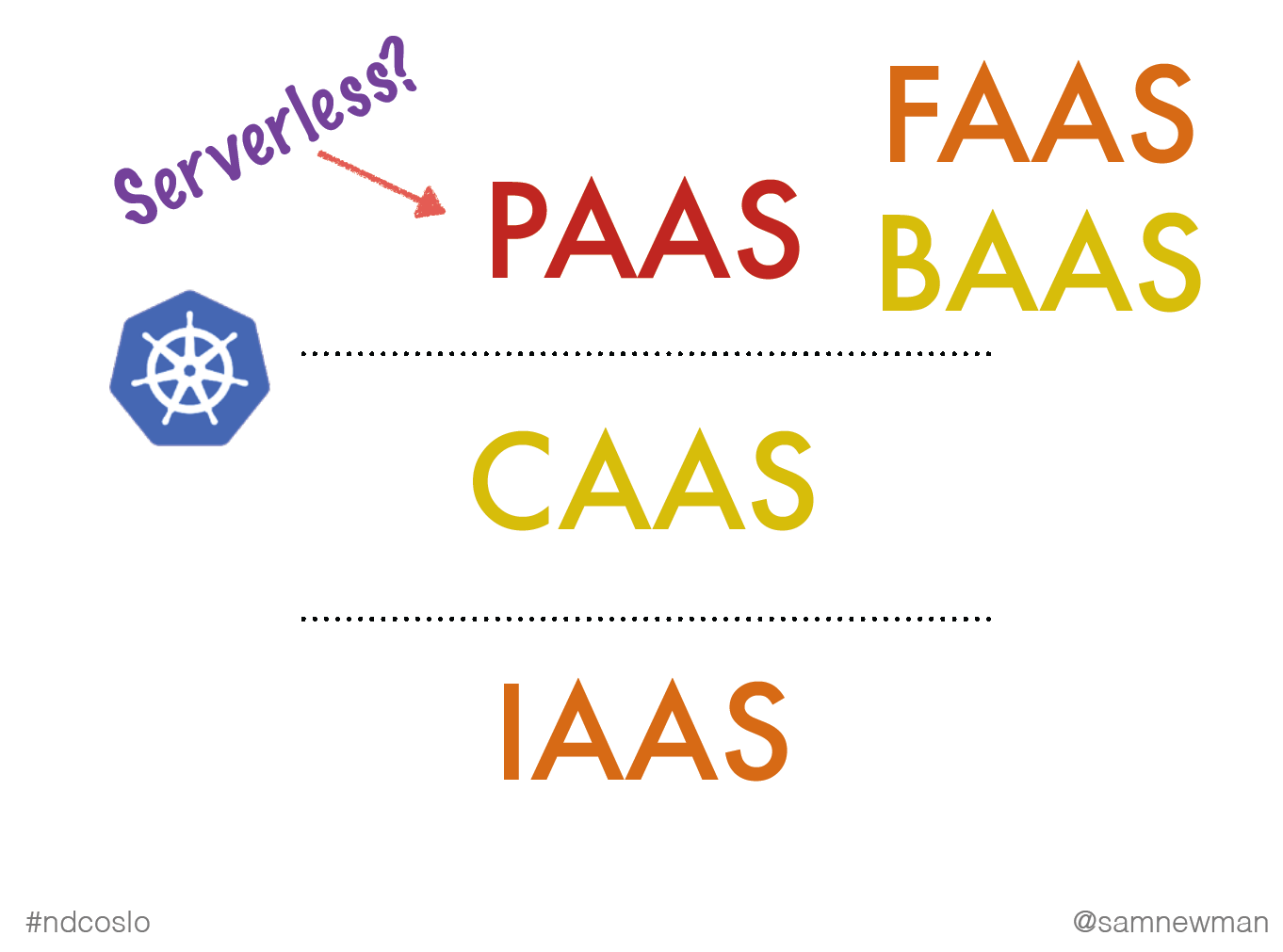

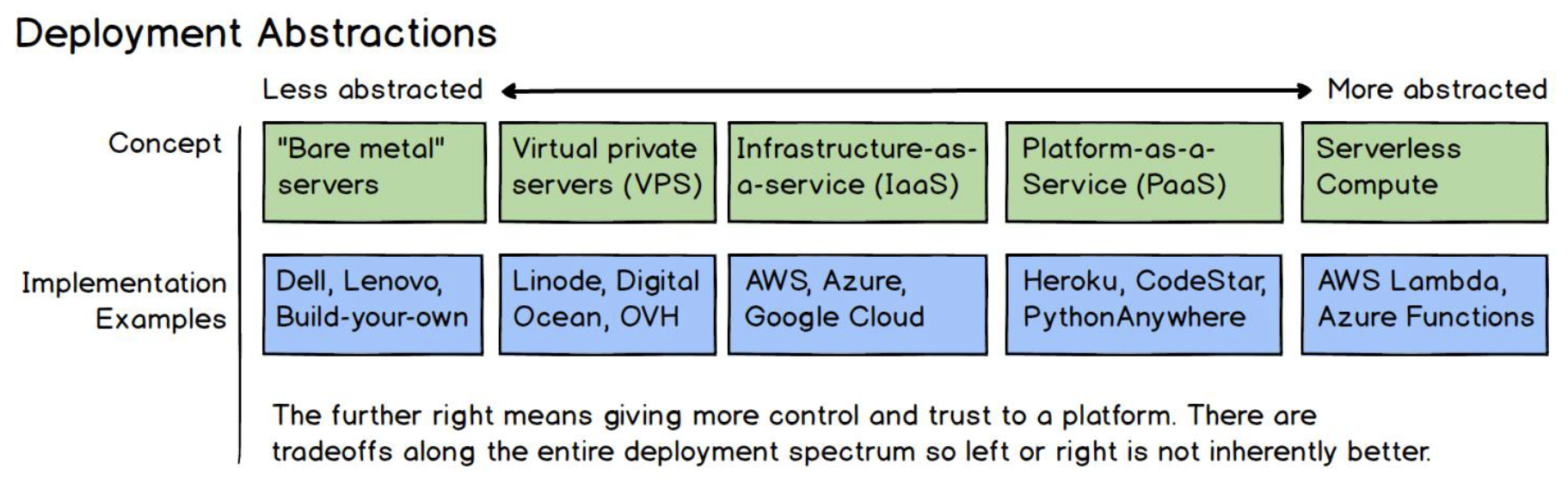

*aaS – what should I remember?

That over all these years we’ve been searching for the right abstraction on which developers can build apps, and this seems to be PaaS…

And as Sam Newman says: “it’s all about abstractions”.

Containers – what should I remember?

- They are NOT virtual machines

- They are processes born from tarballs, anchored to namespaces and controlled by cgroups.

- Linux containers (LXC, on which LXD was later built) existed before Docker; early Docker releases even used LXC to run containers

- There have been some container-runtime wars (e.g. Docker vs. rkt), and they have had an effect on the history of Kubernetes
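To make the “anchored to namespaces and controlled by cgroups” point concrete, here is a minimal Python sketch, assuming a Linux host with `/proc` mounted. It reads the namespace links under `/proc/<pid>/ns` and the cgroup membership from `/proc/<pid>/cgroup`; the helper name `describe_process_isolation` is hypothetical. Running it on the host and then inside a container should typically show different namespace IDs (the numbers in brackets) and a different cgroup path, even though both are ordinary processes.

```python
import os


def describe_process_isolation(pid="self"):
    """Return (namespaces, cgroups) for a process on Linux.

    namespaces maps a namespace type (e.g. "pid", "net", "mnt") to its link
    target such as "pid:[4026531836]"; two processes with the same target
    share that namespace. cgroups holds the raw lines of /proc/<pid>/cgroup.
    On non-Linux systems, or for a pid that does not exist, both are empty.
    """
    namespaces = {}
    ns_dir = f"/proc/{pid}/ns"
    try:
        entries = sorted(os.listdir(ns_dir))
    except OSError:
        entries = []
    for name in entries:
        try:
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            # Inspecting another process's namespaces may require privileges.
            continue

    cgroups = []
    try:
        with open(f"/proc/{pid}/cgroup") as f:
            cgroups = [line.strip() for line in f if line.strip()]
    except OSError:
        pass
    return namespaces, cgroups


if __name__ == "__main__":
    ns, cg = describe_process_isolation()
    for kind, target in ns.items():
        print(f"{kind:20} {target}")
    for line in cg:
        print("cgroup:", line)
```

Nothing here is container-specific: the same call works for any process, which is exactly the point that a container is a process with a particular set of namespaces and cgroups.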

Kubernetes – what should I remember?

- it is a platform where you can run container workloads

- it’s easier for everyone to understand the abstractions they build themselves or that grow organically within their organization

- the key is to understand that Kubernetes brings many abstractions to solve many common problems

- it’s not the perfect tool for every job; your case might need something simpler. Kubernetes is a good fit when your apps face traffic spikes and need to scale.

- Kubernetes also offers solutions to deployment problems: rolling updates and rollbacks are built into Deployments, while blue-green and canary releases can be built on top of its primitives
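Rolling updates are the default strategy of a Kubernetes Deployment, bounded by its `maxSurge` and `maxUnavailable` settings. The Python sketch below is a simplified simulation, not the real controller: it assumes every new pod is ready the instant it is created, and it takes both settings as absolute pod counts (Kubernetes also accepts percentages). The function name `rolling_update_steps` is hypothetical. Every snapshot respects two limits: at most `replicas + max_surge` pods in total, and at least `replicas - max_unavailable` ready pods.

```python
def rolling_update_steps(replicas, max_surge, max_unavailable):
    """Simulate a simplified rolling update from an old to a new version.

    Returns a list of (old_pods, new_pods) snapshots. Simplification (an
    assumption, not real Kubernetes behavior): new pods are ready instantly.
    """
    if max_surge < 0 or max_unavailable < 0:
        raise ValueError("max_surge and max_unavailable must be non-negative")
    if max_surge == 0 and max_unavailable == 0:
        # No extra pods and no missing pods allowed: nothing could be replaced.
        raise ValueError("max_surge and max_unavailable cannot both be 0")

    max_total = replicas + max_surge            # ceiling on pods running at once
    min_available = replicas - max_unavailable  # floor on ready pods

    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Surge: create new-version pods up to the max_total ceiling.
        surged = min(replicas, max_total - old)
        if surged != new:
            new = surged
            steps.append((old, new))
        # Drain: delete old-version pods while keeping min_available ready.
        drained = min(old, max(0, min_available - new))
        if drained != old:
            old = drained
            steps.append((old, new))
    return steps


if __name__ == "__main__":
    for old, new in rolling_update_steps(replicas=4, max_surge=1, max_unavailable=1):
        print(f"old={old} new={new}")
```

With 4 replicas and both limits set to 1, the sketch moves through (4, 0), (4, 1), (2, 1), (2, 3), (0, 3), (0, 4): the total never exceeds 5 pods and never drops below 3, which is the trade-off the two settings control.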

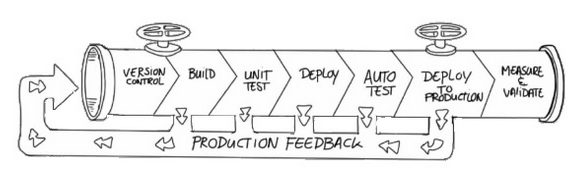

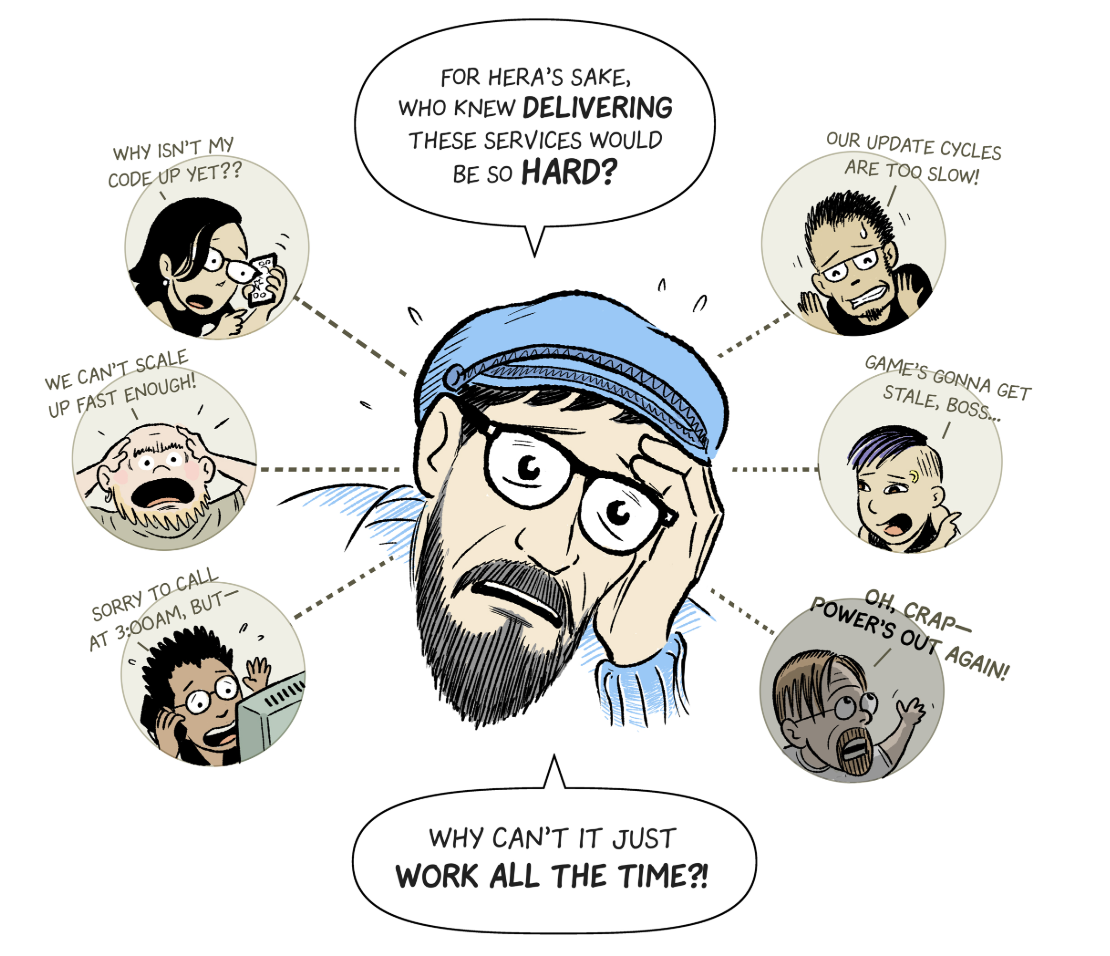

- designing / writing / testing / maintaining / running software (especially for highly available apps) involves collaboration between different groups of people, and sometimes there are repetitive tasks and procedures that take a lot of time or lead to conflicts…

…Kubernetes is here to help solve problems faced by system operators, DevOps teams, developers, QA engineers, site reliability engineers… (read more in the k8s comic).
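Reacting to traffic spikes is one of those repetitive tasks Kubernetes can take over. The Horizontal Pod Autoscaler documentation describes its core rule as `desiredReplicas = ceil[currentReplicas * (currentMetricValue / desiredMetricValue)]`, leaving the replica count unchanged when the ratio is within a tolerance (0.1 by default). The Python function below is a minimal sketch of just that rule; the name `desired_replicas`, its parameters and its default bounds are hypothetical, and the real controller additionally deals with pod readiness, missing metrics and stabilization windows.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Apply the HPA scaling rule to a single metric (e.g. average CPU usage).

    min_replicas/max_replicas play the role of an HPA's minReplicas and
    maxReplicas; the defaults here are illustrative only.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp the result to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # A spike pushes usage to twice the target, so 3 replicas become 6.
    print(desired_replicas(current_replicas=3, current_metric=100, target_metric=50))  # prints 6
```

Rounding up with `ceil` errs on the side of too many pods rather than too few, and the tolerance band keeps small metric fluctuations from constantly adding and removing pods.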

Abstractions – what should I remember?

Abstractions have helped computer science evolve. But there has always been the question: “how much abstraction is too much abstraction?”. The humorous Fundamental Theorem of Software Engineering states that:

“We can solve any problem by introducing an extra level of indirection”

and is often expanded by the clause:

“…except for the problem of too many levels of indirection”

implying that too many abstractions may create intrinsic complexity issues of their own.

Read more…

- https://loige.co/from-bare-metal-to-serverless/

- https://hackernoon.com/why-the-fuss-about-serverless-4370b1596da0

- https://www.martinfowler.com/articles/serverless.html

- https://www.slideshare.net/randybias/the-history-of-pets-vs-cattle-and-using-it-properly

- https://www.slideshare.net/spnewman/what-is-this-cloud-native-thing-anyway

- https://www.slideshare.net/spnewman/confusion-in-the-land-of-the-serverless

- https://www.slideshare.net/JorgeMorales124/build-and-run-applications-in-a-dockerless-kubernetes-world

- https://medium.com/@adriaandejonge/moving-from-docker-to-rkt-310dc9aec938

- https://coreos.com/rkt/docs/latest/rkt-vs-other-projects.html

- https://www.slideshare.net/jpetazzo/anatomy-of-a-container-namespaces-cgroups-some-filesystem-magic-linuxcon